Human bosses can be quite out of control and make everyone’s life miserable. But human jerks may pale in comparison to robot jerks, which could have the intelligence and technology to move “simple” humans out of the way, permanently.

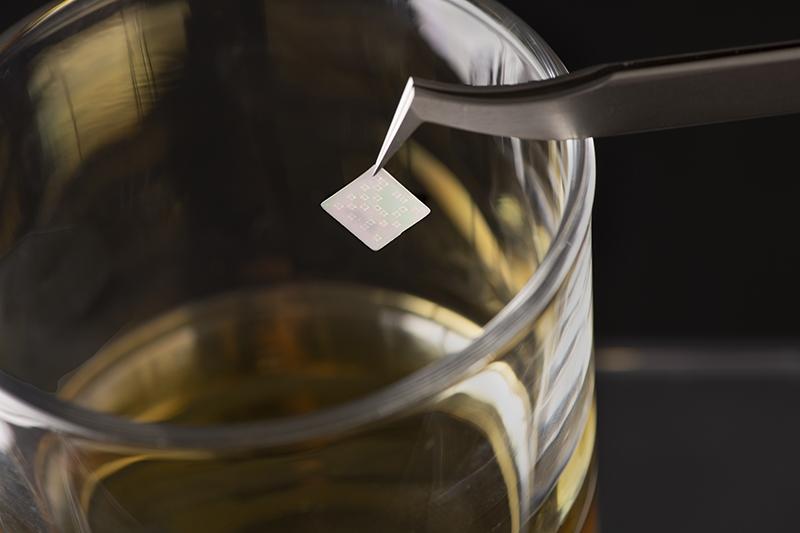

With current supercomputers capable of performing 2,507 trillion calculations per second, it is likely that future robots, perhaps within the next decade, will carry even more computing power around in their human-size heads, with the potential to create far more mayhem than the worst of human bosses.

The Risk to Humans of Advanced AI

A number of the world’s scientific and business leaders, including Stephen Hawking and Elon Musk, have signed a letter warning of the risks inherent in Artificial Intelligence (AI). Musk has gone so far as to contribute $10 million to the Future of Life Institute, which focuses on these risks.

Here’s the open letter. It is worth reading in full:

Research Priorities for Robust and Beneficial Artificial Intelligence: an Open Letter

Artificial intelligence (AI) research has explored a variety of problems and approaches since its inception, but for the last 20 years or so has been focused on the problems surrounding the construction of intelligent agents – systems that perceive and act in some environment. In this context, “intelligence” is related to statistical and economic notions of rationality – colloquially, the ability to make good decisions, plans, or inferences. The adoption of probabilistic and decision-theoretic representations and statistical learning methods has led to a large degree of integration and cross-fertilization among AI, machine learning, statistics, control theory, neuroscience, and other fields. The establishment of shared theoretical frameworks, combined with the availability of data and processing power, has yielded remarkable successes in various component tasks such as speech recognition, image classification, autonomous vehicles, machine translation, legged locomotion, and question-answering systems.

As capabilities in these areas and others cross the threshold from laboratory research to economically valuable technologies, a virtuous cycle takes hold whereby even small improvements in performance are worth large sums of money, prompting greater investments in research. There is now a broad consensus that AI research is progressing steadily, and that its impact on society is likely to increase.

The potential benefits are huge, since everything that civilization has to offer is a product of human intelligence; we cannot predict what we might achieve when this intelligence is magnified by the tools AI may provide, but the eradication of disease and poverty are not unfathomable. Because of the great potential of AI, it is important to research how to reap its benefits while avoiding potential pitfalls.

The progress in AI research makes it timely to focus research not only on making AI more capable, but also on maximizing the societal benefit of AI. Such considerations motivated the AAAI 2008-09 Presidential Panel on Long-Term AI Futures and other projects on AI impacts, and constitute a significant expansion of the field of AI itself, which up to now has focused largely on techniques that are neutral with respect to purpose.

We recommend expanded research aimed at ensuring that increasingly capable AI systems are robust and beneficial: our AI systems must do what we want them to do. The attached research priorities document gives many examples of such research directions that can help maximize the societal benefit of AI. This research is by necessity interdisciplinary, because it involves both society and AI. It ranges from economics, law and philosophy to computer security, formal methods and, of course, various branches of AI itself.

In summary, we believe that research on how to make AI systems robust and beneficial is both important and timely, and that there are concrete research directions that can be pursued today.

The following people make up the Future of Life Institute team:

- Jaan Tallinn

- Max Tegmark

- Viktoriya Krakovna

- Anthony Aguirre

- Meia Chita-Tegmark

- Alan Alda

- Nick Bostrom

- Erik Brynjolfsson

- George Church

- Morgan Freeman

- Alan Guth

- Stephen Hawking

- Christof Koch

- Elon Musk

- Saul Perlmutter

- Martin Rees

- Francesca Rossi

- Stuart Russell

- Frank Wilczek

- Riley Carney

- Ales Flidr

- Jesse Galef

- Eric Gastfriend

- Anders Huitfeldt

- Janos Kramar

- Richard Mallah

- Daniel R. Miller

- Chase Moores

- Robert Rhodes

Related articles on IndustryTap:

- If You Ask Elon Musk… Artificial Intelligence Can Certainly Take Over Humans One Day

- Swiss ABB Makes Strategic Investment In Artificial Intelligence & Machine Learning

- Robot Uprisings? Will Computers With Human-Like Intelligence Control Our Future?

References and related content: