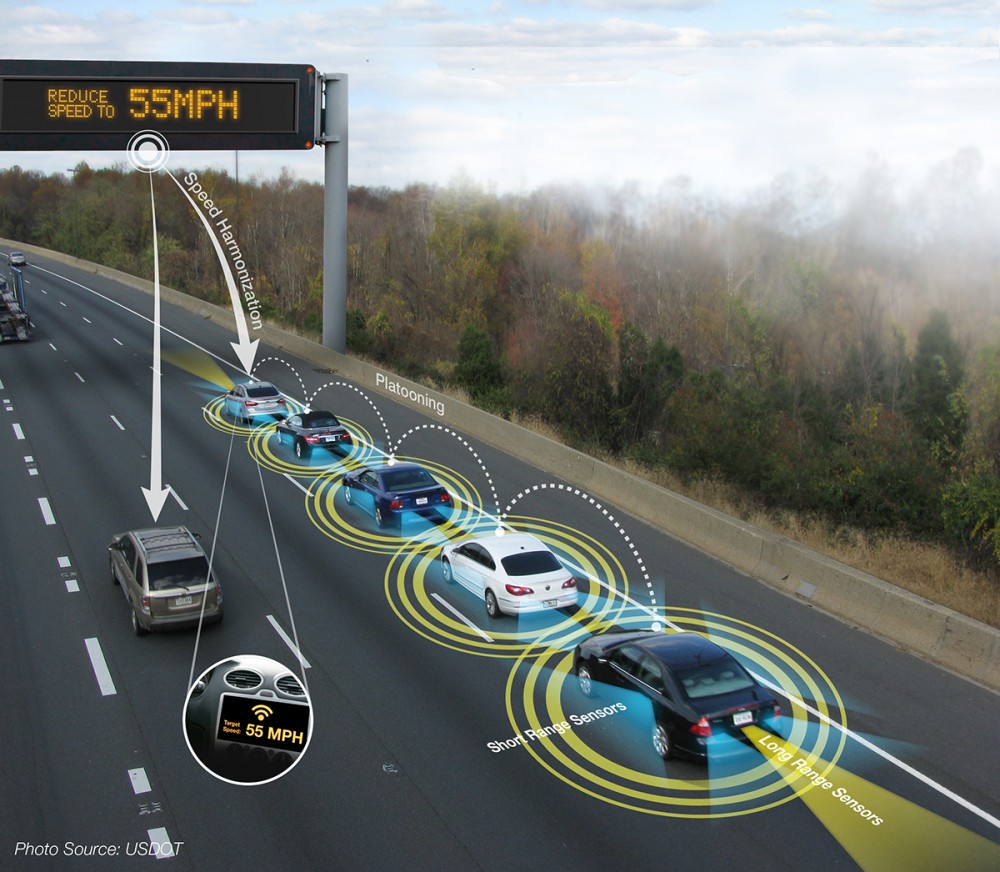

The advantages and disadvantages of self-driving cars are pretty evident to most. Many of us cherish the idea of taking cat naps on medium- to long-range trips, or of reading or doing paperwork so that a ride in a car can be a productive experience.

But while all of us can imagine the upsides, it’s perhaps easier to imagine the downsides and nightmares that mostly involve accidents. After seeing someone die in a taxi accident in New York City in the 1980s, I swore that I would never die that way. And yet here I find myself intrigued by the possibility of a driverless chauffeur at my beck and call.

To allay our fears, the US government’s National Highway Traffic Safety Administration (NHTSA) has come up with a 15-point federal checklist for self-driving cars to promote safety. But my imagination produces the image of a hungover, tired, hungry, lovelorn, suicidal computer programmer “taking his eye off the ball” as he’s writing a critical line of code that will lead to my violent and bloody death. Instead of “turn right” to avoid an object, his code will be “turn left,” and that will be the end of me.

And yet, the government must do what the government must do. Here is a summary of its 15-point checklist:

- Data sharing – Carmakers need to collect information and make it available so that regulators can look out for the best interests of the public by reconstructing what has gone wrong in the event of crashes and system breakdowns.

- Privacy – Whatever data is collected should be kept private.

- System safety – Software malfunctions would seem to be inevitable, and so self-driving car systems must be built with backup systems.

- Digital security – Driverless cars should be secure and protected from “joyriding,” i.e. being taken over by hackers and crashed.

- Human-machine interface – Vehicles should be able to switch between autopilot and human control.

- Crashworthiness – Driverless cars must meet national regulatory standards for crashworthiness so that occupants are protected when crashes do occur.

- Consumer education – Automakers must make sure consumers are knowledgeable about driverless vehicles, are capable of using them, and understand the vehicles’ capabilities and limitations.

- Certification – Software that is to be used with driverless vehicles must be approved by the National Highway Traffic Safety Administration (NHTSA).

- Post-crash behavior – If an accident has occurred, automakers must pinpoint the cause and prove their cars are safe to use again after a crash.

- Laws and practices – Driverless vehicles are required to follow state and local laws and practices related to driving, including speed limits, U-turns, right-turn behavior, and red lights.

- Ethical considerations – Human drivers must often make ethical decisions when driving. With driverless cars, what types of ethical decisions can be made by a computer?

- Operational design – Manufacturers of driverless vehicles must prove their vehicles have been tested and validated to handle various driving situations and conditions, including driving at night, on dirt roads, etc.

- Detection and response – Automakers must show that their driverless cars respond properly to other cars, pedestrians, animals, falling trees, etc.

- Fallback – When a malfunction is detected in a vehicle, there should be a smooth fallback from automated driving to human control, if necessary.

- Validation – Automakers must develop testing and validation criteria that account for the wide range of technologies expected to be used in driverless cars. Testing should include simulations, test-track driving, and on-road testing.